Theme 01

Adaptive Perception

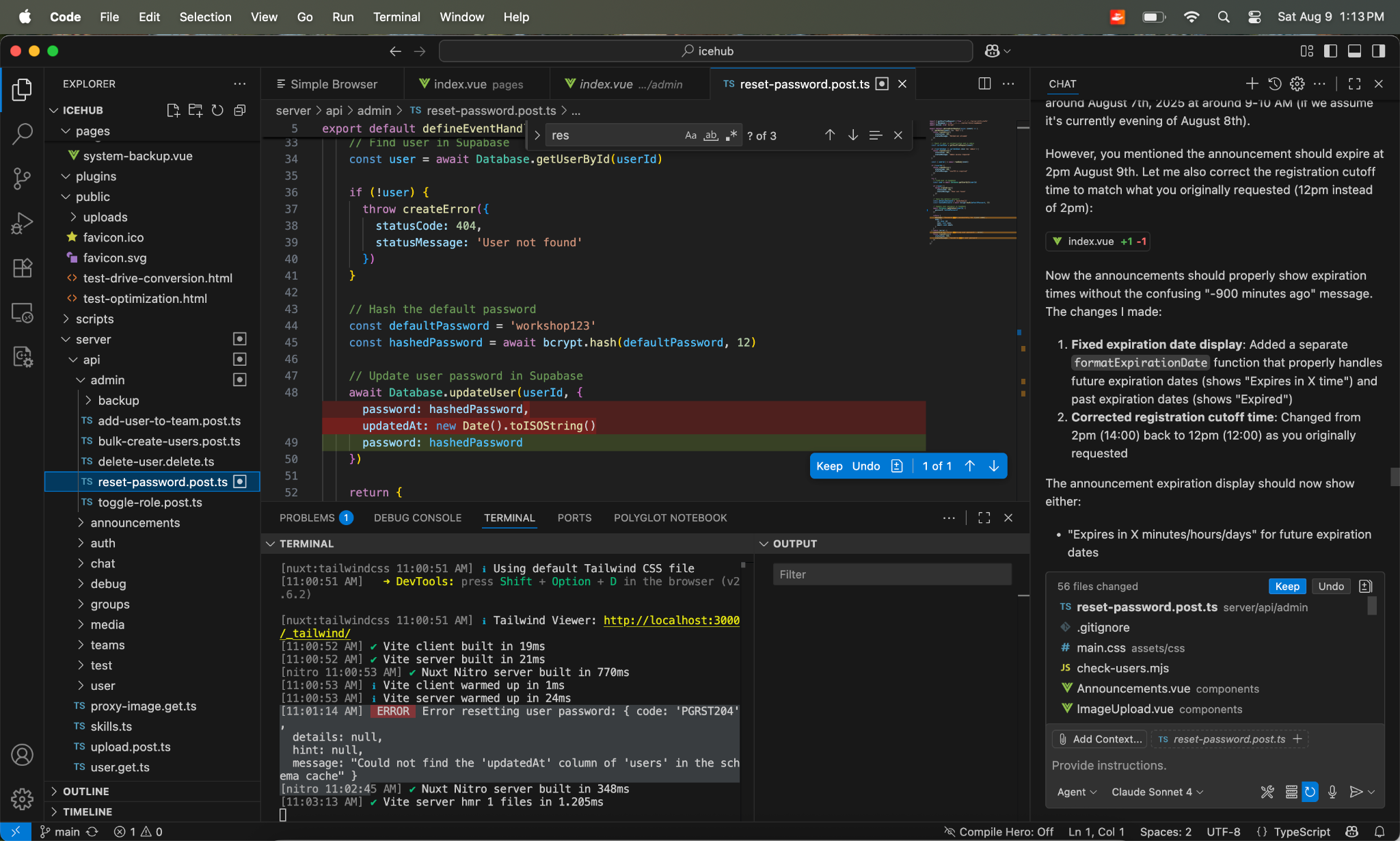

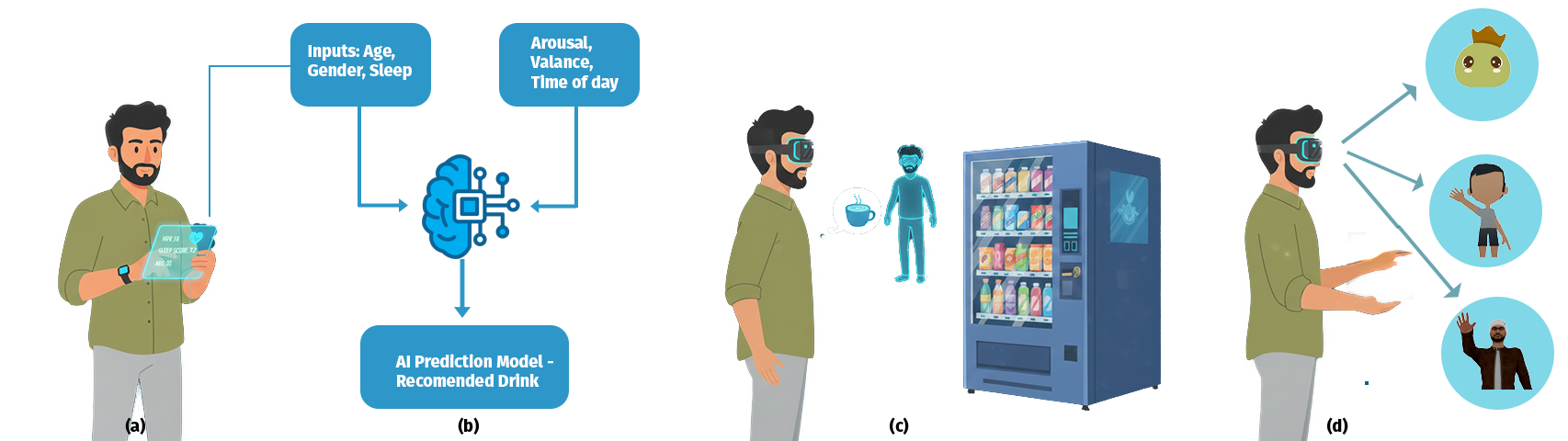

This theme explores how environments can respond to attention, task demands, and changing user state. We study perception as a dynamic loop between sensing, inference, and interface adaptation.

Adaptive XR systems can modulate pacing, atmosphere, and guidance based on how perception changes over time.