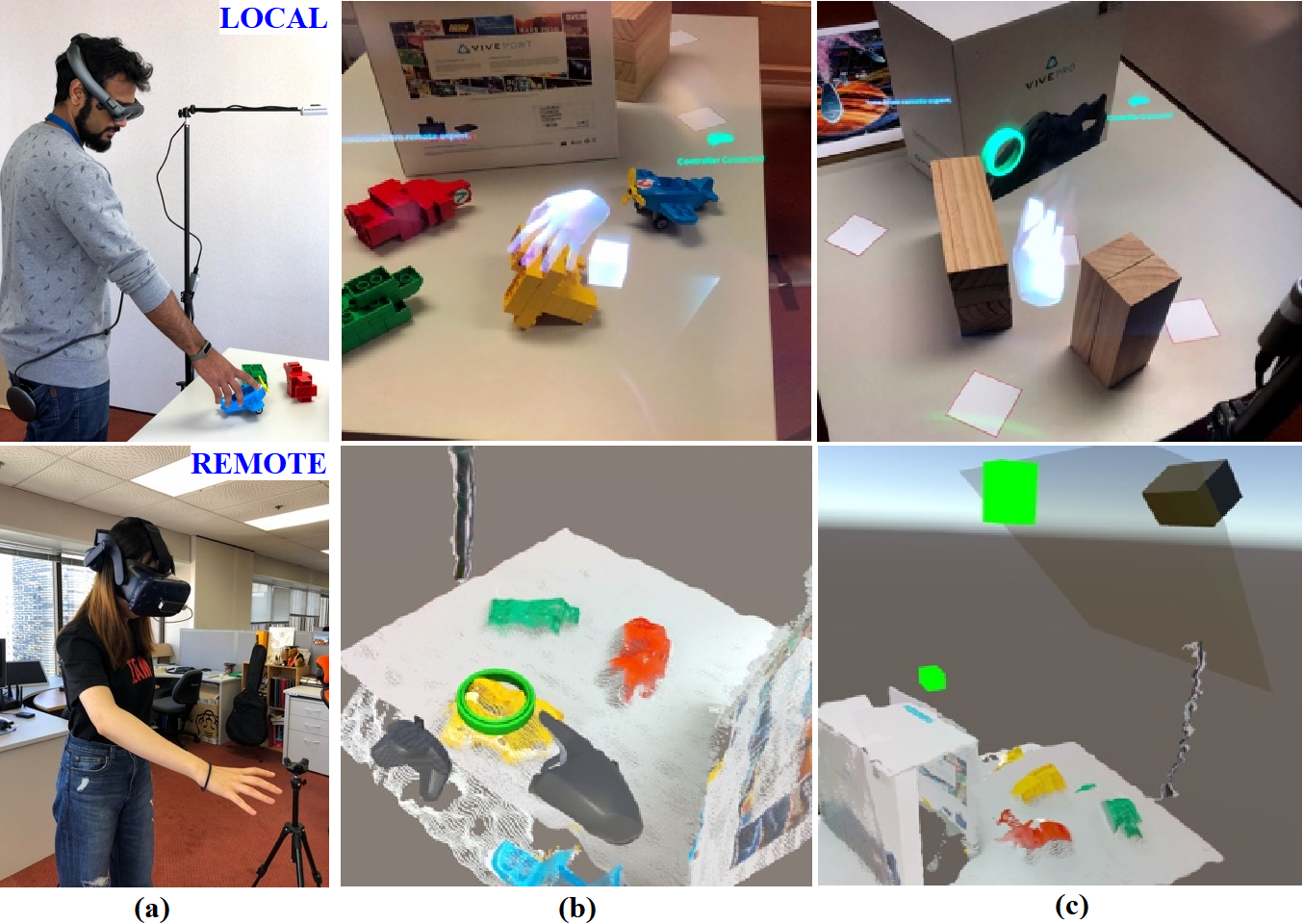

Shared Perception is a mixed reality framework that enables people to collaborate by sharing not just information, but what they see, attend to, and do in space. Instead of relying on verbal descriptions or screen-based communication, the system allows users to interact within a shared 3D context using natural cues like gaze and gesture.

The core idea is simple: collaboration becomes more effective when people operate within the same perceptual frame.

Research Context

This work builds on research in mixed reality remote collaboration and social VR. In particular, our prior work explored how combining 3D scene sharing with eye gaze and hand gestures can improve communication between remote users.

In parallel, research on social interaction in VR shows that gaze plays a critical role in how people align with each other, not only behaviorally but also at a neural level, where it influences inter-brain synchrony during collaboration.

Together, these directions point to a broader question: can systems enable people to not just communicate, but perceive together?

How It Works

Shared Perception systems combine three key components:

- Spatial sharing: A live 3D representation of the environment allows remote users to observe and navigate the same space.

- Gaze as attention: Eye gaze is visualized to indicate focus, intent, and awareness in real time.

- Gesture as action: Hand gestures provide direct, spatial guidance for interaction, placement, and manipulation.

These signals are aligned in a shared coordinate system, allowing users to feel co-located, even when physically distant.
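As an illustration only, the alignment step could be sketched as follows. This is not the system's actual implementation, which the text does not describe. The names `Pose`, `GazeRay`, and `gaze_hit_on_plane` are hypothetical, and each device's pose is simplified to a yaw rotation about the vertical axis plus a translation. The sketch maps a locally tracked gaze ray or hand position into the shared frame, then finds where the gaze lands on a horizontal surface.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Pose:
    """Hypothetical device pose in the shared frame (assumption: yaw + translation only)."""
    yaw_rad: float
    translation: Vec3

    def _rotate(self, v: Vec3) -> Vec3:
        # Rotation about the vertical (y) axis.
        c, s = math.cos(self.yaw_rad), math.sin(self.yaw_rad)
        x, y, z = v
        return (c * x + s * z, y, -s * x + c * z)

    def point_to_shared(self, p: Vec3) -> Vec3:
        # Points (e.g. a fingertip position) are rotated, then translated.
        x, y, z = self._rotate(p)
        tx, ty, tz = self.translation
        return (x + tx, y + ty, z + tz)

    def direction_to_shared(self, d: Vec3) -> Vec3:
        # Directions (e.g. a gaze direction) are rotated only.
        return self._rotate(d)


@dataclass(frozen=True)
class GazeRay:
    """Hypothetical gaze sample: an origin and a direction in some frame."""
    origin: Vec3
    direction: Vec3

    def to_shared(self, pose: Pose) -> "GazeRay":
        return GazeRay(pose.point_to_shared(self.origin),
                       pose.direction_to_shared(self.direction))


def gaze_hit_on_plane(ray: GazeRay, plane_y: float = 0.0) -> Optional[Vec3]:
    """Return where the ray meets the horizontal plane y = plane_y, or None."""
    ox, oy, oz = ray.origin
    dx, dy, dz = ray.direction
    if dy == 0.0:
        return None  # ray is parallel to the plane
    t = (plane_y - oy) / dy
    if t < 0.0:
        return None  # plane lies behind the viewer
    return (ox + t * dx, oy + t * dy, oz + t * dz)


# A remote user's local gaze ray, mapped into the shared frame.
remote_pose = Pose(yaw_rad=math.pi / 2, translation=(1.0, 0.0, 0.0))
local_ray = GazeRay(origin=(0.0, 1.6, 0.0), direction=(0.0, -1.0, 1.0))
shared_ray = local_ray.to_shared(remote_pose)
focus_point = gaze_hit_on_plane(shared_ray)
```

Once every user's gaze and hand data is expressed in the same frame, a gaze point from one user and a gesture position from another can be compared or rendered directly, without per-viewer conversion.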

Key Findings

Our studies show that sharing perceptual cues significantly improves collaboration compared to verbal communication alone.

- Combining gaze and gesture leads to faster task completion and more efficient guidance.

- Users report a stronger sense of co-presence and shared understanding.

- Visual cues reduce cognitive load by removing the need to translate spatial information into words.

Importantly, different cues play different roles: gaze helps establish attention, while gesture supports action. Together, they create a more complete interaction loop.

Why It Matters

Most remote systems focus on transmitting information. Shared Perception focuses on aligning experience.

By making attention and action visible, the system enables:

- More intuitive collaboration

- Fewer misunderstandings

- A stronger sense of working together in the same space

This shifts remote interaction from explanation to demonstration.

Broader Implications

Shared Perception opens up new possibilities across domains:

- Remote assistance, where experts guide tasks directly in context

- Training and education, where learning happens through shared attention and demonstration

- Collaborative design, where teams think and act within the same spatial environment

Beyond performance, these systems also hint at deeper alignment, where collaborators are not just coordinated, but in sync.

Next Directions

Current work points toward integrating Shared Perception with adaptive systems that respond to user state.

Future directions include:

- Adaptive guidance based on attention and behavior

- Integration with physiological sensing

- Long-term studies of collaboration quality and learning

For FlowsXR, Shared Perception represents a foundational layer, enabling systems that do not just connect people, but align how they perceive and act together.