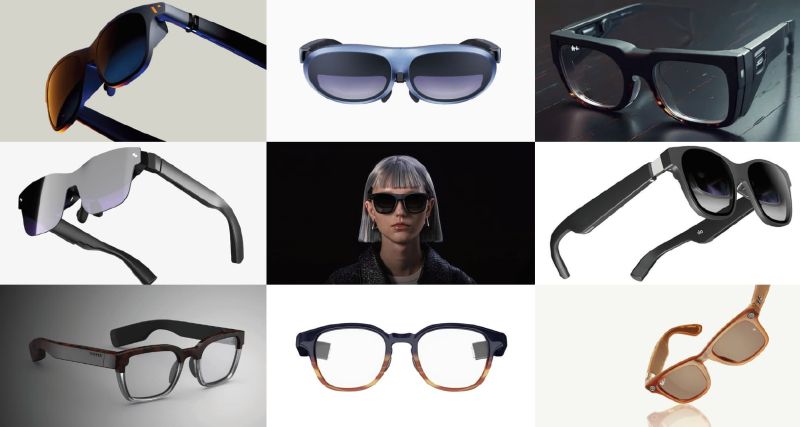

Smartglasses have quietly become one of the most exciting product categories in tech. We tracked 53 commercially available devices and 15 SDKs across four tiers: sub-50 g audio-first frames (led by Ray-Ban Meta and a wave of Chinese manufacturers like Rokid and Xiaomi), HUD glasses with Micro-LED waveguide displays, USB-C tethered cinema glasses, and bleeding-edge 6-DoF spatial AR from Google, Snap, and Meta. Over 70% of the devices we tracked now ship with AI built in, and the consumer sweet spot sits around $300–$600.

On the developer side, HUD SDKs like ActiveLook and Even Hub use phone-hosted BLE architectures for lightweight companion apps: the app logic runs on the phone, and the glasses act as a thin display over Bluetooth Low Energy. Spatial platforms like Android XR (with Unity and Jetpack Compose support) and Snap Lens Studio enable full 3D AR. The developer experience varies widely, from open-source repos to gated enterprise programs, but the tooling is maturing fast.
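To make the phone-hosted BLE pattern more concrete, here is a minimal Python sketch of what the phone side of such a companion app might do: encode a display command, then split it into chunks small enough for a BLE write. The `TextCommand` class, its opcode, and its byte layout are hypothetical and invented for illustration; they are not the wire format of ActiveLook, Even Hub, or any other SDK. The only real-world constant is the 20-byte payload limit, which follows from the default BLE ATT MTU of 23 bytes minus a 3-byte header.

```python
from __future__ import annotations

from dataclasses import dataclass

# Default BLE ATT MTU is 23 bytes; 3 bytes are ATT header, which leaves
# 20 bytes of payload per write unless a larger MTU is negotiated.
DEFAULT_ATT_PAYLOAD = 20


@dataclass(frozen=True)
class TextCommand:
    """Hypothetical 'draw text' command. Not any vendor's real wire format."""

    x: int
    y: int
    text: str

    def encode(self) -> bytes:
        body = self.text.encode("utf-8")
        if not (0 <= self.x <= 0xFFFF and 0 <= self.y <= 0xFFFF):
            raise ValueError("coordinates must fit in 16 bits")
        if len(body) > 255:
            raise ValueError("text must encode to at most 255 bytes")
        # Assumed layout: opcode(1) | x(2, big-endian) | y(2, big-endian) | length(1) | text
        return (
            bytes([0x01])
            + self.x.to_bytes(2, "big")
            + self.y.to_bytes(2, "big")
            + bytes([len(body)])
            + body
        )


def chunk_for_ble(payload: bytes, max_chunk: int = DEFAULT_ATT_PAYLOAD) -> list[bytes]:
    """Split a payload into consecutive chunks of at most max_chunk bytes."""
    if max_chunk <= 0:
        raise ValueError("max_chunk must be positive")
    return [payload[i : i + max_chunk] for i in range(0, len(payload), max_chunk)]


if __name__ == "__main__":
    packet = TextCommand(x=10, y=20, text="Step 3: tighten the valve").encode()
    for index, chunk in enumerate(chunk_for_ble(packet)):
        # A real app would hand each chunk to the platform's BLE write call here.
        print(index, chunk.hex())
```

In a real companion app, each chunk would go to the platform's BLE stack (for example, a GATT characteristic write), and the vendor SDK would typically hide the encoding and chunking entirely. The sketch only illustrates why these apps stay lightweight: the glasses receive small display commands while the phone does the heavy lifting.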

For us at FlowsXR, this convergence of lightweight hardware, capable SDKs, and mainstream AI is opening real opportunities: enterprise training on HUD glasses instead of headsets, spatial storytelling on 6-DoF platforms, and accessible experiences through audio-first and caption-enabled frames. The hardware is finally catching up to the vision.