Physiology-Informed Recommendations in Mixed Reality

EmoDrink is a mixed reality research framework for turning physiological and contextual data into everyday wellbeing recommendations. Rather than showing heart-rate or sleep metrics as dashboards and scores, the system translates those signals into a concrete suggestion in a familiar decision context: choosing a drink from a vending machine.

The project focuses less on whether a recommendation engine can produce a result and more on how that result should be communicated. EmoDrink asks a sharper interaction-design question: how does embodiment change the way people interpret, trust, and act on the same wellbeing advice?

Research Context

The paper, "EmoDrink: An Embodied MR Framework for Physiology-Informed Beverage Recommendations," positions the work at the intersection of personal informatics, physiological sensing, recommender systems, and embodied interaction. The motivation is straightforward: wearables increasingly capture meaningful indicators of stress, recovery, and affect, but they often leave users with metrics that are hard to interpret in the moment.

EmoDrink addresses that translation problem by using mixed reality to externalize the recommendation through embodied representations instead of raw dashboards. The system illustrates how the same underlying recommendation can feel different depending on whether it is presented as an abstract visual form, a generic humanoid agent, or a personalized avatar.

How EmoDrink Works

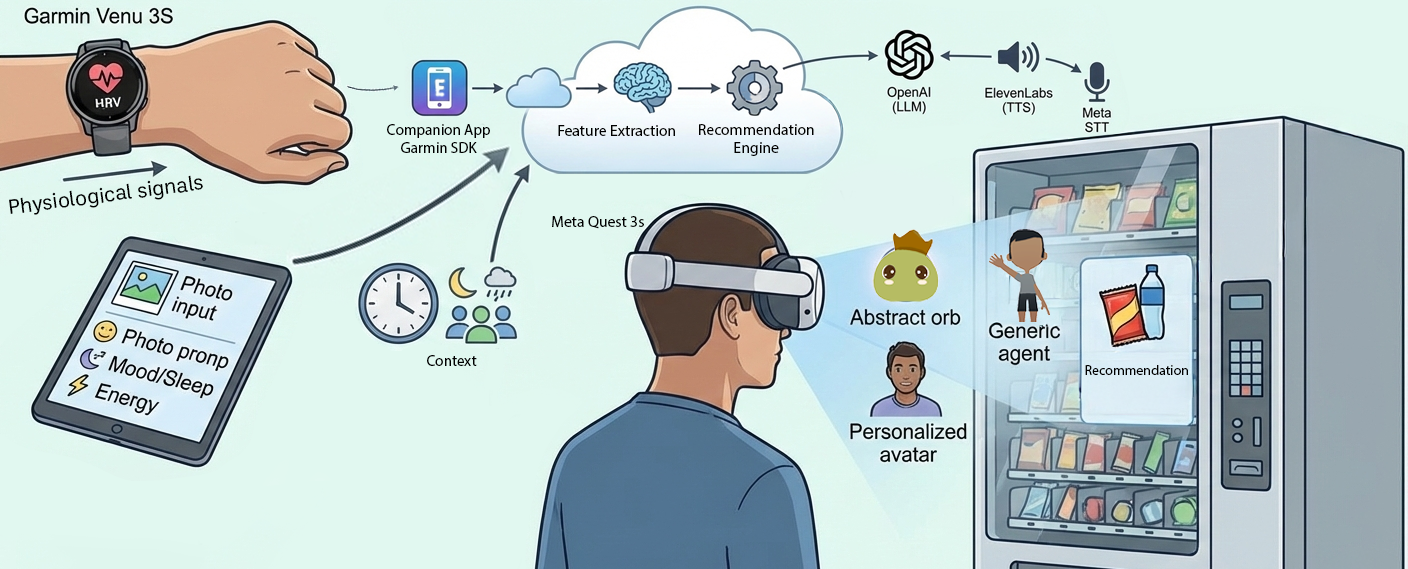

The experience combines four components, three input sources and the backend that consumes them:

- physiological sensing from a Garmin Venu 3S smartwatch

- short self-reports about mood, sleep quality, and energy

- contextual cues

- a recommendation backend that generates a single suggestion and rationale

At the start of a session, the participant wears the watch and remains relatively still for about a minute so the system can capture a short HRV window representing current physiological state. Those measurements are streamed to a backend and combined with the self-reported inputs.
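
The capture step can be illustrated with a minimal sketch. The source does not say which HRV metric EmoDrink computes, so RMSSD (a common time-domain HRV measure) is used here purely as an assumption. The function names, payload fields, and 1-to-5 self-report scale are likewise hypothetical.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between RR intervals (ms).

    Assumption: RMSSD stands in for whatever HRV metric the real system uses.
    """
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def build_session_payload(rr_intervals_ms, mood, sleep_quality, energy):
    """Combine the HRV summary with the self-reports (hypothetical field names)."""
    return {
        "hrv_rmssd_ms": rmssd(rr_intervals_ms),
        "self_report": {
            "mood": mood,
            "sleep_quality": sleep_quality,
            "energy": energy,
        },
    }


# Illustrative RR intervals in milliseconds, not recorded data.
payload = build_session_payload([800, 810, 790, 800], mood=3, sleep_quality=4, energy=2)
```

A real one-minute window would contain many more intervals, and would usually need artifact filtering before any metric is computed.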

The system then maps the incoming data to a coarse arousal-valence model rather than claiming precise emotion labels. That choice is important to the project: the framework is intentionally suggestive rather than diagnostic, and it is designed to support reflection rather than overstate confidence.
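
One way to picture a coarse mapping is a quadrant assignment, sketched below. This is not the project's actual model: the cutoff value, the 1-to-5 self-report scale, the rule that low HRV or high energy counts as high arousal, and all names are placeholder assumptions chosen only to show how coarse the output is meant to be.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoarseState:
    arousal: str  # "low" or "high"
    valence: str  # "negative" or "positive"


def coarse_state(hrv_rmssd_ms, mood, energy, hrv_cutoff_ms=30.0, scale_midpoint=3):
    """Assign one of four quadrants instead of a specific emotion label.

    Assumptions: mood and energy are self-reported on a 1-to-5 scale, and
    hrv_cutoff_ms is an arbitrary placeholder, not a validated threshold.
    """
    low_hrv = hrv_rmssd_ms < hrv_cutoff_ms
    arousal = "high" if (low_hrv or energy > scale_midpoint) else "low"
    valence = "positive" if mood >= scale_midpoint else "negative"
    return CoarseState(arousal=arousal, valence=valence)


tense = coarse_state(hrv_rmssd_ms=22.0, mood=2, energy=2)
relaxed = coarse_state(hrv_rmssd_ms=55.0, mood=4, energy=2)
```

Returning only a quadrant, with no emotion name or confidence score, matches the suggestive rather than diagnostic framing described above.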

Embodiment as the Variable

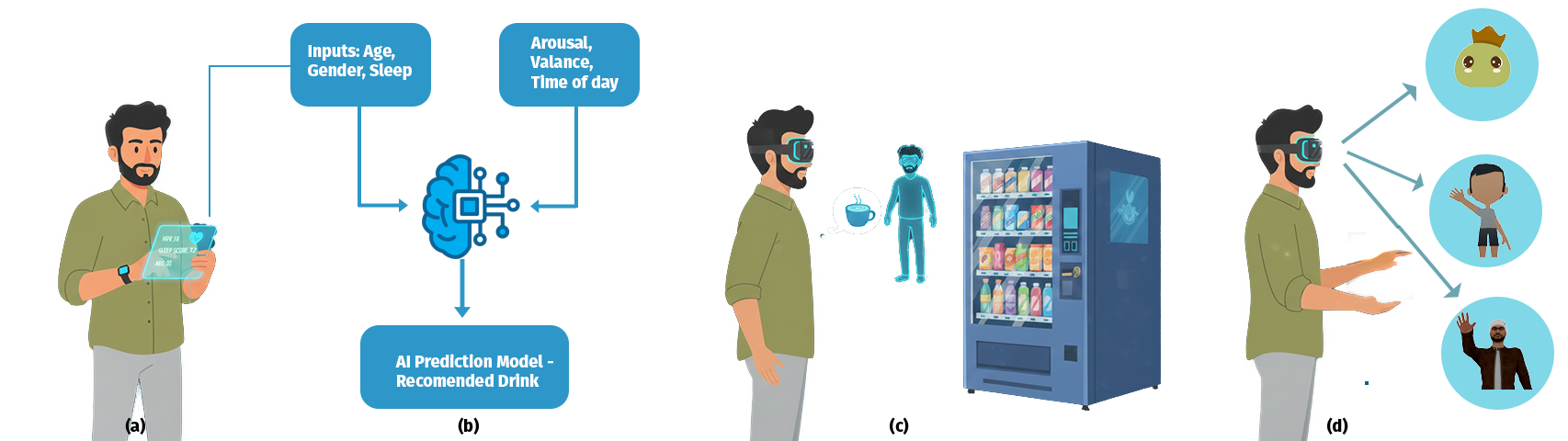

EmoDrink keeps the recommendation itself constant while changing only the form in which it is delivered. The paper describes three presentation strategies:

- Abstract visualization using a minimal data orb

- Generic agent using a stylized humanoid embodiment

- Personalized avatar using a user-specific future-self representation created from tablet photo capture

Each mode presents the same suggested drink and the same rationale. This lets the system isolate embodiment as the primary design variable and examine how representation changes trust, clarity, comfort, and perceived actionability.
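
The separation between content and presentation can be sketched as an immutable recommendation that is passed unchanged to each embodiment mode. The class names, the `present` function, and the example drink and rationale are hypothetical; only the three modes come from the description above.

```python
from dataclasses import dataclass
from enum import Enum


class Embodiment(Enum):
    ABSTRACT_ORB = "abstract visualization"
    GENERIC_AGENT = "generic agent"
    PERSONALIZED_AVATAR = "personalized avatar"


@dataclass(frozen=True)
class Recommendation:
    drink: str
    rationale: str


def present(rec, mode):
    """Pair the fixed recommendation with one embodiment mode.

    Only the 'embodiment' field differs between modes.
    """
    return {"embodiment": mode.value, "drink": rec.drink, "rationale": rec.rationale}


# Placeholder content, not taken from the EmoDrink drink database.
rec = Recommendation(drink="chamomile tea", rationale="placeholder rationale text")
presentations = [present(rec, mode) for mode in Embodiment]
```

Making the recommendation immutable is one simple way to guarantee that any difference a participant perceives comes from the representation rather than from the advice.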

The Vending-Machine Scenario

The recommendation is staged through a virtual vending machine. The scenario suits the study because beverage selection is familiar, low-stakes, and self-explanatory, so users do not need to learn a domain-specific task before reacting to the recommendation itself.

Drinks are selected from a curated database of more than 40 items, organized by functional properties such as calming or hydrating. The result is a scenario that feels concrete enough to support action, but neutral enough to keep attention on the communication design rather than the novelty of the task.
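
A lookup by functional property might look like the sketch below. The drink names, the data layout, and the `candidates` helper are illustrative assumptions. Only the "calming" and "hydrating" property labels come from the description above, and the real database holds more than 40 items.

```python
# Hypothetical miniature catalog, tagged by functional property.
DRINKS = [
    {"name": "chamomile tea", "properties": {"calming"}},
    {"name": "still water", "properties": {"hydrating"}},
    {"name": "herbal iced tea", "properties": {"calming", "hydrating"}},
]


def candidates(drinks, wanted_property):
    """Return the names of drinks tagged with the wanted functional property."""
    return [d["name"] for d in drinks if wanted_property in d["properties"]]


calming_options = candidates(DRINKS, "calming")
hydrating_options = candidates(DRINKS, "hydrating")
```

The backend would still need a rule for narrowing the candidates to the single suggestion and rationale it returns. That step is not described in the source and is left out here.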

Demo Flow

The CHI interactivity experience is designed as a short walk-up-and-use flow lasting about five to seven minutes:

- onboarding with photo capture, physiological sensing, and self-report input

- calibration and entry into the mixed reality vending-machine scene

- within-subject comparison across the three embodiment modes

- short reflection, optional revisits, and post-experience feedback

The transitions between modalities are system-orchestrated so users can focus on the perceptual differences instead of learning separate interaction patterns for each representation.

Figure: EmoDrink demo flow